Elasticsearch Inverted Index & Analysis (Tokenization)

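Forward Index and Inverted Index

A forward index maps a document to its content; an inverted index goes the other way and maps each term to the documents that contain it. A book's table of contents is a familiar everyday example of a forward index.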

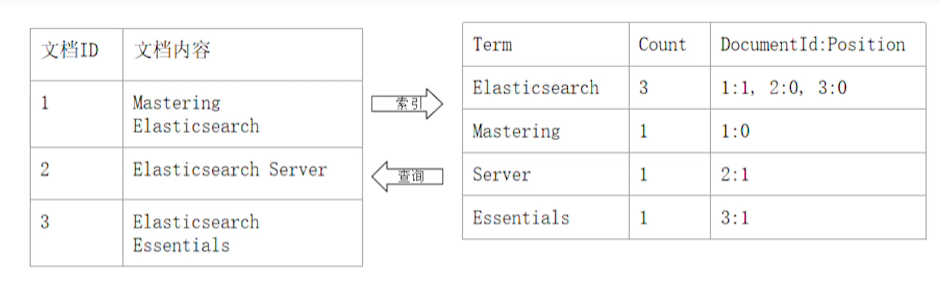

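Core Components of an Inverted Index

An inverted index has two main parts:

- Term Dictionary

Records every term and maps each term to its posting list.

The term dictionary is usually large, so it is typically implemented with a structure that offers fast insertion and lookup, such as a B+ tree or a hash table with separate chaining.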

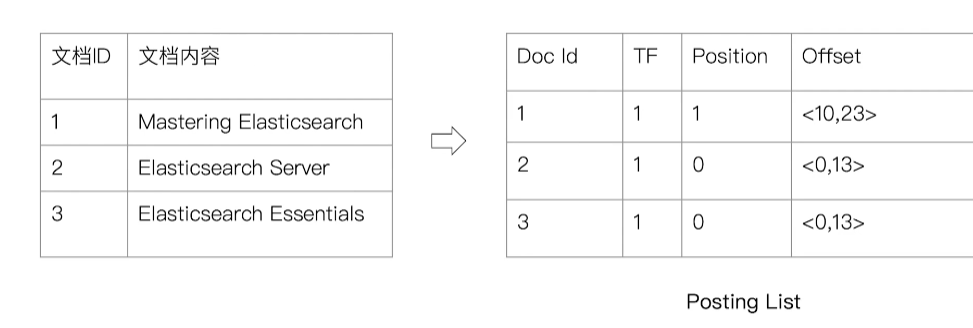

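- Posting List

Records the set of documents that contain a term. It is made up of postings.

Each posting contains:

- Document ID

- Term frequency (TF): the number of times the term appears in the document, used for relevance scoring

- Position: the term's ordinal position among the document's tokens, used for phrase search

- Offset: the start and end character positions of the term in the document, used for highlighting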
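
To make the posting fields concrete, here is a minimal Python sketch that builds a toy inverted index in memory. It is only an illustration under simplifying assumptions: tokens are found with the regex `\w+` and lowercased, a plain `dict` stands in for the term dictionary, and the function name and sample documents are made up for this example. It does not reflect how Elasticsearch or Lucene store the index on disk.

```python
import re
from collections import defaultdict

def build_inverted_index(docs):
    """Map each term to its posting list (one posting per document)."""
    index = defaultdict(dict)  # term dictionary: term -> {doc_id: posting}
    for doc_id, text in docs.items():
        # Position is the token's ordinal; offsets are character spans.
        for position, match in enumerate(re.finditer(r"\w+", text)):
            term = match.group().lower()
            posting = index[term].setdefault(
                doc_id, {"tf": 0, "positions": [], "offsets": []}
            )
            posting["tf"] += 1
            posting["positions"].append(position)
            posting["offsets"].append((match.start(), match.end()))
    return index

# Hypothetical sample documents, keyed by document ID.
docs = {
    1: "Mastering Elasticsearch",
    2: "Elasticsearch Server",
    3: "Elasticsearch Essentials and Elasticsearch Basics",
}
index = build_inverted_index(docs)
print(sorted(index["elasticsearch"]))  # IDs of documents containing the term
print(index["elasticsearch"][3])       # TF, positions, and offsets in document 3
```

Positions make phrase search possible because two terms are adjacent exactly when their positions differ by one, while offsets let a highlighter slice the matching characters out of the original text.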

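Analysis & Analyzer

Analysis is text analysis: the process of converting full text into a series of terms (tokens), also known as tokenization. Analysis is carried out by an analyzer.

You can use Elasticsearch's built-in analyzers or define custom ones as needed.

Terms are produced not only when data is written. At query time, the query string must also be analyzed with the same analyzer.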

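Components of an Analyzer

An analyzer is the component dedicated to tokenization. It consists of three parts:

- Character Filters: preprocess the raw text, for example by stripping HTML

- Tokenizer: splits the text into terms according to a set of rules

- Token Filters: post-process the resulting terms, for example by lowercasing, removing stop words, or adding synonyms

Text is generally processed in the order Character Filters -> Tokenizer -> Token Filters.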
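
The three stages can be sketched in plain Python as a small pipeline. This is a rough illustration, not Elasticsearch's implementation: the regexes, the function names, and the tiny stop-word set are all assumptions chosen to keep the example short.

```python
import re

STOP_WORDS = {"a", "an", "the", "is", "in"}  # illustrative subset only

def strip_html(text):
    """Character filter: replace anything that looks like an HTML tag."""
    return re.sub(r"<[^>]+>", " ", text)

def tokenize(text):
    """Tokenizer: split the filtered text into word tokens."""
    return re.findall(r"\w+", text)

def lowercase(tokens):
    """Token filter: lowercase every token."""
    return [t.lower() for t in tokens]

def remove_stop_words(tokens):
    """Token filter: drop tokens found in the stop-word set."""
    return [t for t in tokens if t not in STOP_WORDS]

def analyze(text):
    # Character filter -> tokenizer -> token filters, in that order.
    tokens = tokenize(strip_html(text))
    for token_filter in (lowercase, remove_stop_words):
        tokens = token_filter(tokens)
    return tokens

print(analyze("<p>The Quick Brown Fox is in <b>Elasticsearch</b></p>"))
```

The order of the token filters matters here: the stop-word set is lowercase, so lowercasing has to run before stop-word removal or "The" would slip through.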

Using the _analyze API

- Test with a specified analyzer

- Test against a field of a specific index

- Test a custom combination of tokenizer and filters
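
The three usages above correspond to three request shapes. The Python sketch below only builds and prints the request bodies; it does not contact a cluster. The index name `my_index`, the field name `title`, and the sample text are hypothetical, and sending the requests (for example from the Kibana console or an HTTP client) is left out.

```python
import json

# (1) Test a named analyzer directly.
by_analyzer = {
    "method": "GET",
    "path": "/_analyze",
    "body": {"analyzer": "standard", "text": "Mastering Elasticsearch"},
}

# (2) Test with the analyzer configured for a field of an index.
# "my_index" and "title" are hypothetical names.
by_field = {
    "method": "POST",
    "path": "/my_index/_analyze",
    "body": {"field": "title", "text": "Mastering Elasticsearch"},
}

# (3) Test an ad-hoc combination of tokenizer and token filters.
custom = {
    "method": "POST",
    "path": "/_analyze",
    "body": {
        "tokenizer": "standard",
        "filter": ["lowercase"],
        "text": "Mastering Elasticsearch",
    },
}

for request in (by_analyzer, by_field, custom):
    print(request["method"], request["path"])
    print(json.dumps(request["body"], indent=2))
```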

Analyzer Types

Standard Analyzer

- The default analyzer

- Splits text on word boundaries

- Lowercases tokens

Composition:

- Tokenizer: Standard

- Token Filters: Standard, LowerCase, and Stop (disabled by default)

Simple Analyzer

- Splits on non-letter characters and discards them

- Lowercases tokens

Composition:

- Tokenizer: LowerCase
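
As a rough approximation of this behavior, the Python sketch below keeps only runs of letters and lowercases them. The regex `[^\W\d_]+` is an assumption standing in for "letters", and `simple_analyze` is a made-up name; the real tokenizer may differ on unusual Unicode input.

```python
import re

def simple_analyze(text):
    """Keep runs of letters, discard everything else, then lowercase."""
    return [t.lower() for t in re.findall(r"[^\W\d_]+", text)]

print(simple_analyze("2 running Quick brown-foxes leap over lazy dogs"))
```

Note that the digit "2" is dropped and "brown-foxes" becomes two tokens, since neither digits nor hyphens are letters.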

Whitespace Analyzer

- Splits on whitespace

Composition:

- Tokenizer: Whitespace

Stop Analyzer

- Adds a stop filter on top of the Simple Analyzer

- Removes stop words such as the, a, and is

Composition:

- Tokenizer: LowerCase

- Token Filters: Stop

Keyword Analyzer

- Does no tokenization; emits the whole input as a single term

Composition:

- Tokenizer: Keyword

Pattern Analyzer

- Tokenizes with a regular expression

- The default pattern is \W+, which splits on runs of non-word characters

Composition:

- Tokenizer: Pattern

- Token Filters: LowerCase and Stop
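
The idea of using a regular expression as the separator can be sketched in Python with `re.split`. This is an approximation for illustration only: `pattern_analyze` and its parameters are made-up names, Python's regex dialect is not the one Elasticsearch uses, and stop-word removal is left out for brevity.

```python
import re

def pattern_analyze(text, pattern=r"\W+", lowercase=True):
    """Use the regex as a separator; drop empty strings left at the edges."""
    tokens = [t for t in re.split(pattern, text) if t]
    return [t.lower() for t in tokens] if lowercase else tokens

# Default pattern: split on runs of non-word characters.
print(pattern_analyze("2 running Quick brown-foxes, in the summer."))

# Custom pattern: split a comma-separated line on commas only.
print(pattern_analyze("2024-01-15,ERROR,Disk full", pattern=r","))
```

Unlike the letter-only approach, the default pattern keeps digits, because `\W` treats digits and underscores as word characters.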

Language Analyzer

Provides analyzers tailored to individual languages.

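Chinese Tokenization

Chinese tokenization poses several difficulties: sentences must be segmented into words, there are no natural delimiters like the spaces in English, and the same text can be segmented differently depending on context.

A commonly used analyzer for Chinese is the ICU Analyzer:

- Requires installing a plugin

elasticsearch-plugin install analysis-icu

- Provides Unicode support and handles Asian languages better

Composition:

- Character Filters: Normalization

- Tokenizer: ICU Tokenizer

- Token Filters: Normalization, Folding, Collation, and Transform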
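
To give a feel for what the Normalization step is for, the sketch below uses Python's standard `unicodedata` module to apply NFKC normalization followed by case folding. This is only an analogy under the assumption that the ICU normalizer performs a similar compatibility normalization plus case folding; it does not call ICU, and the function name `normalize` is made up. Word segmentation, the job of the ICU Tokenizer, is not attempted here.

```python
import unicodedata

def normalize(text):
    """NFKC-normalize, then case-fold, so visually equivalent forms match."""
    return unicodedata.normalize("NFKC", text).casefold()

# Full-width Latin letters, as often typed with an East Asian input method.
full_width = "\uff25\uff4c\uff41\uff53\uff54\uff49\uff43"
print(normalize(full_width) == "elastic")

# The single-character ligature U+FB01 expands to the two letters "fi".
print(normalize("\ufb01le") == "file")
```

Without a step like this, a query typed in half-width characters would not match text indexed in full-width characters, even though a reader sees the same word.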

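More Chinese analyzers:

- IK

  - Supports custom dictionaries and hot reloading of the dictionary

  - https://github.com/medcl/elasticsearch-analysis-ik

- THULAC

  - THU Lexical Analyzer for Chinese, a Chinese tokenizer from the Natural Language Processing and Computational Social Science Lab at Tsinghua University

  - https://github.com/microbun/elasticsearch-thulac-plugin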

References

https://time.geekbang.org/course/intro/100030501?tab=catalog